The Checkbox Category Trap: How to sell when every competitor claims the same thing

When everyone has “cost optimization,” the only defensible edge is fast proof.

I met the founders in early 2024. One was a seasoned CEO, not new to startups, and not romantic about the work. He knew GTM isn’t a slide deck you build later. It’s the thing that decides whether you live long enough to matter. The second was a sharp data engineer with real senior roles behind him. When they reached out, they had a product taking shape, a few design partners, and the same fear every infrastructure startup eventually bumps into: big data is crowded, loud, and expensive to enter. If you don’t show up with a clear position, you get erased.

What they built looked simple on the surface: an AI agent for end‑to‑end data pipeline efficiency, starting where modern data spend actually happens. You connect it, and it maps what’s running, what it costs, what it touches, and what it’s doing to your warehouses and workloads. It doesn’t just tell you “spend is up.” It shows you where the waste lives, down to the parts data teams actually control: queries, pipelines, schedules, and usage.

The tricky part was that “cost optimization” wasn’t a category. It was a checkbox. Every serious data platform had a cost story. The big cloud players had their own dashboards. The observability vendors had modules. And the FinOps players already owned mindshare. On paper, it all sounded the same: visibility, savings, controls. In reality, this team was coming from the product side. They could analyze pipelines and SQL and tell you *why* a workload was expensive, *who* was driving it, and *what* to change. That’s very different from tagging cloud spend and drawing charts. But explaining that difference in one breath? Hard.

Time capsule, 2024: the data cloud matured, and the bills kept climbing.

The shift to the data cloud started with a promise: flexibility, speed, and yes, cost savings. But by 2024, the pattern had flipped. Companies didn’t just “move to the cloud.” They multiplied everything inside it: more pipelines, more transformations, more dashboards, more compute flavors, more teams shipping data products. Self‑serve analytics became the default. “Everyone can query” became a badge of honor. And the result was predictable: usage grew faster than governance.

Most organizations found themselves paying for data and code they couldn’t even explain anymore. Tables no one was sure were used. Jobs no one owned. Dashboards built on top of assets nobody trusted. And because the cloud makes it easy to keep running, the waste doesn’t show up as a broken system. It shows up as a slow leak. You don’t feel it until Finance asks why the bill doubled.

That’s when the conversation changed. Cost stopped being a back‑office concern. It became a credibility problem for data leaders. If you couldn’t connect spend to value, you weren’t “data‑driven.” You were just expensive.

The challenge: standing out in a market that doesn’t leave room for small players.

This space is brutal. Big vendors spend real money to own the narrative. And the buyers (data architects and data engineering leaders) are allergic to marketing language. They love new technology. They hate vague promises. If you show up with generic cost claims, they’ll lump you into the same bucket as everyone else and move on.

So the company had two problems at once. First: the category was noisy, and “cost optimization” sounded like table stakes. Second: the audience demanded technical truth, not sales polish. The usual playbook (bigger claims, prettier decks) would backfire.

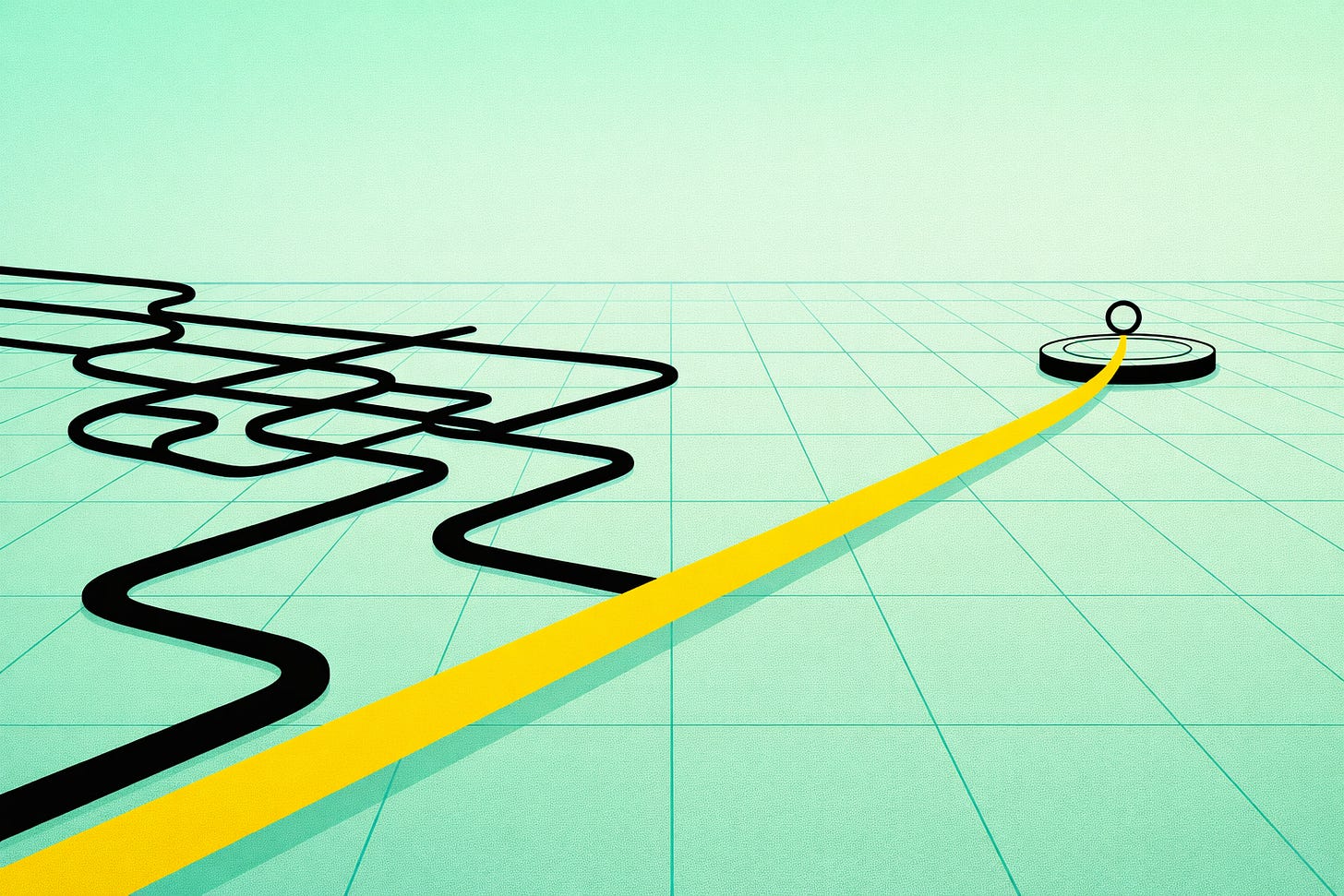

The shift: from “cost optimization” to “pipeline efficiency you can prove fast”.

The turning point wasn’t new code. It was a positioning decision: stop trying to win the cost category war on slogans. Instead, win on speed of value. Make the first interaction feel like engineering, not marketing. Give data leaders something they respect: a clear diagnosis of what’s happening in their workloads, and an actionable path to fix it.

The message moved from “we help you save money” to something sharper: you’re paying for pipelines and assets you don’t even know you’re running, and we can show you where, before this becomes your next budget firefight.

The GTM play: look bigger than you are by acting like the authority.

They leaned into a few moves that compound early:

Thought leadership that speaks engineer. Less “ROI content,” more real insight: how waste forms in modern warehouses, why lineage matters for cost, how to find unused assets, how to stop runaway workloads before the invoice arrives.

Positioning discipline. They didn’t try to sound like a suite. They carved out a point of view: cost is a symptom; efficiency is the control surface.

Start GTM early. They didn’t wait for the “perfect product.” They went into the market to learn language, objections, and buying signals while the roadmap was still flexible.

Go where the audience already listens. Data teams don’t hang out in procurement documents. They hang out in communities, conferences, and technical circles. So the team showed up there—and spoke the way data people speak.

Within a year, they went to the major Snowflake conference and came back with months of pipeline: real accounts, real cycles, real deals.

Founder takeaway

In crowded infrastructure markets, your product won’t be judged by your feature list. It will be judged by how fast you can create trust.

Start GTM earlier than feels comfortable. Pick a clear differentiation you can explain in one breath. Make sure the product delivers on the promise, because engineers will test you. And don’t waste time shouting into channels your audience ignores. Go to the places they respect, speak their language, and make the value obvious fast.

That’s how small teams survive in big markets.

Not by being louder. By being undeniable.